(This is the second of two blog posts.)

In the first post, I outlined how document management systems such as SharePoint are only as good as the structures set up to organize and store the data. Even with a good folder structure, there is still the question of what lies within the documents. I finished the first post by posing this question:

“What remains hidden is the content within the documents. Is it really any good?”

In other words, does the document content below ‘sea level’ have acceptable quality? So, let’s now consider how ‘discovery’ and ‘concept mining’ can help determine the answer.

Discovery

When reviewing a document, we tend to read it from start to end. Under tight time constraints, we might opt to read the first few pages and skim the remainder. This approach to ‘discovery’ is error-prone when dealing with voluminous documents in short time frames. Nor can we assume that every reviewer has equal capability or the necessary domain expertise.

Other options for document discovery are possible. Let’s consider one approach.

If we identify particular terms in their textual context, along with their frequency, we get a surprisingly rich sense of what a document’s themes are. Academics call this notion ‘concordance’. To give a concrete example, in the King James Bible, ‘Lord’ occurs 7829 times and ‘God’ 4443 times. It might be fair to infer a theme here.
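To make the idea concrete, here is a minimal sketch of a concordance in Python. It is only an illustration, not how any particular tool works: the helper names (`tokenize`, `term_frequencies`, `concordance`) and the sample text are made up for this post, and it counts every word rather than picking out nouns.

```python
import re
from collections import Counter

def tokenize(text):
    """Split text into lowercase word tokens (letters and apostrophes only)."""
    return re.findall(r"[a-z']+", text.lower())

def term_frequencies(text):
    """Count how often each token occurs in the text."""
    return Counter(tokenize(text))

def concordance(text, term, width=3):
    """Return each occurrence of `term` with up to `width` words of context per side."""
    words = tokenize(text)
    term = term.lower()
    hits = []
    for i, word in enumerate(words):
        if word == term:
            left = " ".join(words[max(0, i - width):i])
            right = " ".join(words[i + 1:i + 1 + width])
            hits.append((left, word, right))
    return hits

# Hypothetical sample text, invented for illustration.
sample = ("The contractor shall assign each IP address. "
          "Each IP range must be documented by the contractor.")

freqs = term_frequencies(sample)
ip_hits = concordance(sample, "IP", width=2)

for left, word, right in ip_hits:
    print(f"{left:>15} [{word}] {right}")
```

The frequency count tells us which terms dominate; the context window around each hit tells us how the term is actually being used.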

In 2008, the NY Times used concordance in a very visually rich way. Check out the link here for more.

They published an analysis of the State of the Union addresses between 2000 and 2007. Interestingly, ‘IRAQ’ started at zero in 2000, tipping past 20 references from 2003 onwards. Visual concordance is useful for identifying textual themes.

‘Discovery’ using VisibleThread is similarly rich. In the case of VisibleThread, we are interested in analyzing sets of MS Office (Word, Excel) or PDF documents. Other than that, it has much the same intent as the NY Times work.

VisibleThread presents a breakdown of the most frequent nouns, correlated with section heading and textual extract. This reveals intent and themes within collections of documents. The example below shows how this works for an arbitrary SOW (Statement of Work) issued by the Department of Homeland Security.

In the screenshot, the list on the left shows the most frequently occurring nouns in the document.

The color coding allows us to correlate the noun to specific section headings in the SOW document. The section headings display to the right of the list in a structure view.

To the far right, a heat map shows term distribution. The specific text extracts containing the identified term are called out in the bottom pane.

We can see pretty clearly from the example that this is an IT-oriented SOW, with ‘IP’ showing 16 occurrences. Further, all 16 instances are confined to the ‘Specific Tasks’ section of the document.
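The ‘confined to one section’ observation is easy to reproduce in miniature. The sketch below assumes the document has already been split into a dictionary of section heading to body text; the function name `count_by_section` and the sample sections are hypothetical, and the real SOW is not reproduced here.

```python
import re

def count_by_section(sections, term):
    """Map each section heading to the number of whole-word hits for `term`."""
    pattern = re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
    return {heading: len(pattern.findall(body)) for heading, body in sections.items()}

# Hypothetical sections, invented for illustration.
sections = {
    "Background": "The agency operates several regional networks.",
    "Specific Tasks": "Assign an IP address to each host. Record every IP range.",
    "Deliverables": "A final report is due within 30 days.",
}

ip_distribution = count_by_section(sections, "IP")

for heading, count in ip_distribution.items():
    print(f"{heading:<16} {'#' * count} ({count})")
```

Printing one row per section gives a crude text version of a heat map: the rows with marks are where the term lives.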

Sometimes the absence of terms is even more interesting, because it reveals completeness issues. In the example above, we see only 1 instance of CIDR. Now that is worth tracking down! An ‘orphan’ or ‘one-off’ reference such as this may well indicate a lack of clarity around the intent or meaning of CIDR. Sorting from low to high frequency can often reveal such gaps and incompleteness. This might apply to either the response doc or the issued doc. In the latter case, a natural opportunity to feed back into and help ‘shape’ the RFP might arise based on these insights.

So, on the one hand, intent is highlighted by high frequency; on the other, lack of quality (or completeness) is flagged by low frequency.
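Here is a rough sketch of that low-to-high sort. It assumes we already have a list of candidate terms we care about; the function name `orphan_terms`, the threshold `max_count`, and the sample text are all made up for illustration.

```python
import re
from collections import Counter

def orphan_terms(text, candidates, max_count=1):
    """Return (term, count) pairs for candidates seen at most `max_count` times.

    Results are sorted rarest first, then alphabetically.
    """
    words = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    counts = {term: words[term.lower()] for term in candidates}
    rare = [(term, count) for term, count in counts.items() if count <= max_count]
    return sorted(rare, key=lambda pair: (pair[1], pair[0]))

# Hypothetical sample text and candidate terms.
sample = ("Assign an IP address to each host. "
          "Document each IP range in CIDR notation. Verify IP routing.")

orphans = orphan_terms(sample, ["IP", "CIDR", "VLAN"])
print(orphans)
```

Terms that never appear (count 0) sort ahead of one-off references, so both kinds of gap surface at the top of the list.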

Concept mining and concept lists

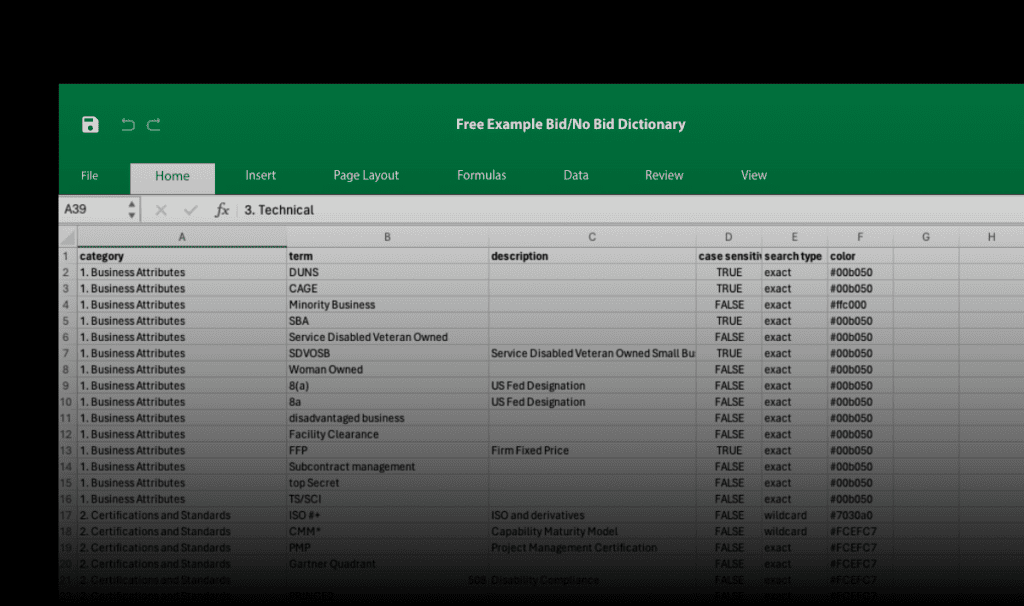

Let’s take this a step further. Up to now, we have discovered document content by analyzing a document or collection of documents. This is based on automatically calculated metrics for noun frequency and distribution. Consider the reverse. What if we had a collection of expected themes expressed as a ‘concept list’? Could we spot deficiencies in a document?

If we are authoring a response to an RFP, for example, we may want to ‘push’ certain language in certain sections. As a Bid Manager today, I would package these concepts into ‘win themes’. Can we ‘inject’ these into the document and flag absence? As it turns out, it’s quite feasible. Let’s see how.

We can create a set of ‘win statements’ as a VisibleThread concept list. The concept list collects language that we want to reinforce. Think of it as collections of theme language packaged into groups, with each group corresponding to a specific theme. If we ensure a liberal sprinkling of theme language in the right sections of the response, we increase the probability of a better score. How does it work?

We scan the document content looking for the theme language, cross-cutting by section, much as we did for discovery.
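As a rough illustration of that scan, the sketch below counts theme hits per section. It is not VisibleThread’s implementation. The names (`scan_concepts`, `concept_list`, `response`) are hypothetical, as are the second theme and the sample response text; only the ‘Theme 5’ name and its three terms come from the example discussed in this post.

```python
import re

def scan_concepts(sections, concept_list):
    """Count hits for each theme in each section.

    Returns a nested dict: {theme: {section heading: hit count}}.
    """
    results = {}
    for theme, phrases in concept_list.items():
        patterns = [re.compile(r"\b" + re.escape(phrase) + r"\b", re.IGNORECASE)
                    for phrase in phrases]
        results[theme] = {
            heading: sum(len(pattern.findall(body)) for pattern in patterns)
            for heading, body in sections.items()
        }
    return results

# Each theme groups the language we want to reinforce.
concept_list = {
    "Theme 5: Delivering improved customer service": ["empowering", "informed", "decisions"],
    "Low risk delivery (hypothetical theme)": ["proven", "on schedule"],
}

# Hypothetical response document, already split by section heading.
response = {
    "Executive Summary": "Our proven approach keeps customers informed and delivers on schedule.",
    "Technical Approach": "The platform runs on a proven architecture.",
    "Management Plan": "Weekly reviews track progress.",
}

hits = scan_concepts(response, concept_list)
for theme, per_section in hits.items():
    print(theme, per_section)
```

Matching whole phrases rather than single words lets a theme carry multi-word language such as ‘on schedule’.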

In the example, we see a number of win themes represented and the ‘hits’ occurring within a response document.

One such theme is ‘Theme 5: Delivering improved customer service’ comprising terms speaking to that theme.

We can clearly see a lack of appropriate distribution of the key theme phrases: ‘empowering’, ‘informed’ and ‘decisions’. Gray coloring indicates the absence of hits. So, we can see the sections where reinforcement of theme language is required.
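The ‘gray cells’ can be approximated with a simple gap report. This is only a sketch under the assumption that the response is available as section heading to body text; `missing_phrases` and the sample response are invented for illustration.

```python
import re

def missing_phrases(sections, phrases):
    """Map each section heading to the theme phrases that never appear in it.

    Sections that contain every phrase are left out of the result.
    """
    gaps = {}
    for heading, body in sections.items():
        absent = [phrase for phrase in phrases
                  if not re.search(r"\b" + re.escape(phrase) + r"\b", body, re.IGNORECASE)]
        if absent:
            gaps[heading] = absent
    return gaps

theme_phrases = ["empowering", "informed", "decisions"]

# Hypothetical response document, split by section heading.
response = {
    "Executive Summary": "We focus on empowering staff to make informed decisions.",
    "Technical Approach": "Dashboards keep managers informed.",
    "Management Plan": "Weekly reviews track progress.",
}

gaps = missing_phrases(response, theme_phrases)
for heading, absent in gaps.items():
    print(f"{heading}: missing {', '.join(absent)}")
```

Each line of output is a candidate spot for reinforcing the theme, though whether a given section actually needs the language remains a judgment call for the author.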

As you can see, dipping below ‘sea level’ can be very revealing. Once you cross-cut by section heading, analysis and metrics can be tremendously powerful. Document Content Analysis includes these techniques of discovery and concept mining. I hope you can see how this is far removed from Content Management. Here, we are diving inside the document content to assess quality.

These are not the only techniques for assessing quality below ‘sea level’. Others exist: structure analysis, word count analysis and passive language analysis, to name a few. All have a role to play in determining content quality. I’ll aim to cover some others in future posts.

In the meantime, I hope the above examples might help you create better quality documents.