Unless you’ve been hiding under a rock, you’ve heard of ChatGPT and AI (Artificial Intelligence). You may have even heard of LLMs (Large Language Models). Since we’re a Language Analysis Platform, people are looking for our take.

Here are the types of questions we hear:

- Do you use AI and what impact does AI have on you?

- Will ChatGPT make parts or all of VT redundant?

- Are you planning on integrating ChatGPT into VT?

- the list goes on…

These come from customers, prospects, and investors alike.

First off, we’re hugely excited by the possibilities that GPT-type AI offers. But like all great leaps forward, there are some nuances and considerations to keep in mind too. Here are the biggest two we see:

- How can you be sure the answers ChatGPT generates are accurate and not hallucinations?

- How can you be sure that confidential data does not make it into the training data, or get exposed in a hack? We’ve seen OpenAI suffer a number of data breaches, which might give pause.

When we “chat” (forgive the pun 😊) with customers like Google, Verizon, and Boeing, we find these two topics come up frequently too. Pretty much every CIO (Chief Information Officer) in every enterprise is actively considering what this new type of AI means for them, both the positives and the negatives.

TL;DR, our view: this stuff is powerful. It just needs to be handled with care.

Now, before we dive deeper, let’s get our terminology straight. What is AI, Generative AI, ChatGPT? And what are LLMs?

What is AI?

Sounds like a simple question, but I’m always amazed at how many people don’t know what AI actually is (even those waxing philosophic about ChatGPT). So, let’s start with that.

Traditional software uses algorithms, essentially a specific set of instructions. If I run an algorithm 100 times on the same data, then I’ll get the same answer every time. Nothing changes.

AI is where you have a model that is trained on feedback or inputs and evolves over time. So, it’s unlike traditional software in that sense. This idea of a “feedback loop” is core to AI. Models tune over time, and generally get better with more feedback. Netflix is a great example of AI in action. Here, the model learns your preferences based on your viewing behavior. If I binge watch “Fast and Furious” (big fan of Dwayne 😊), then I’m unlikely to see “Peppa Pig” showing in my suggestion list. This is AI learning my preferences and adjusting the model.

Now here’s the thing: AI is not new. It dates back to work done in the 1950s. Amazon, Google, and self-driving cars all use copious amounts of AI. And we at VisibleThread use a healthy dose of AI in our products too. In fact, in 2013 (how time flies!) we added AI into VT Docs to power our early “Discovery” capability. We also use another form of AI, NLP (Natural Language Processing), in both VT Docs and VT Writer to identify language structure, parts of speech, etc.

But what about ChatGPT, what is it? And what the heck is an LLM?

This latest evolution of AI actually started in 2017, when Google published a research paper. In it, they introduced a new type of AI model called the Transformer model. This model generates higher quality content than previous approaches. Many refer to AI built on it as Generative AI.

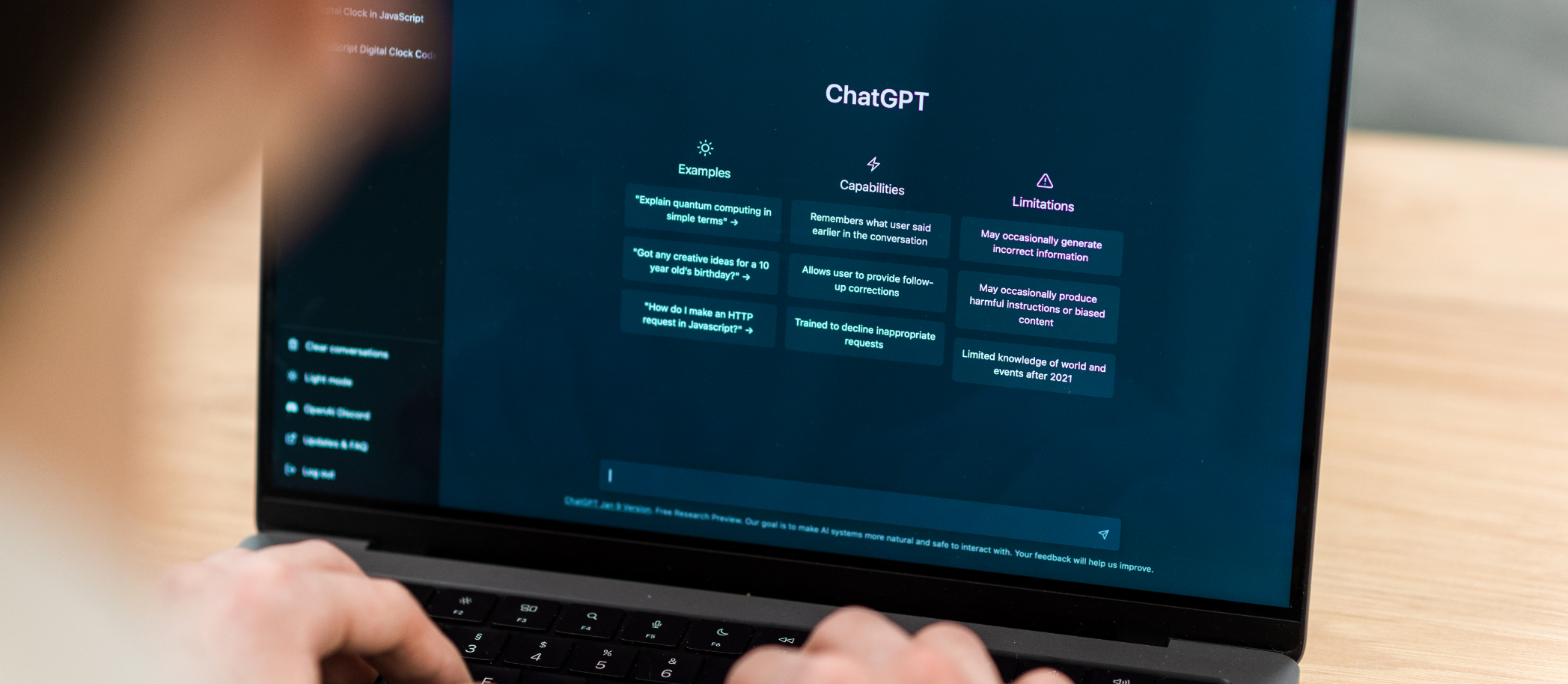

OpenAI doubled down on the Transformer model, culminating with the release of the app ChatGPT in November 2022. It was ChatGPT that really created a stir because it was so easy to use for a non-technical audience. ChatGPT’s user interface is reminiscent of the first (and still current) Google search engine interface, with a very simple “Ask me anything” type entry field.

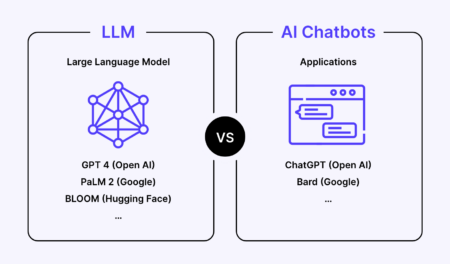

Now underpinning ChatGPT is an LLM (Large Language Model) called GPT. In case you’re wondering, the “T” in GPT stands for “Transformer”. And while we’re at it, the acronym GPT stands for “Generative Pre-trained Transformer”. Using a car analogy, ChatGPT is the car, the LLM is the engine.

And new LLMs are popping up almost on a weekly basis from various sources. You might say it’s fast and furious! (Dwayne again, sorry, couldn’t resist that!). Here’s a useful way to visualize it.

Anyhow, that’s the lay of the land. What’s our position?

1. LLMs and Hallucinations, a reality check

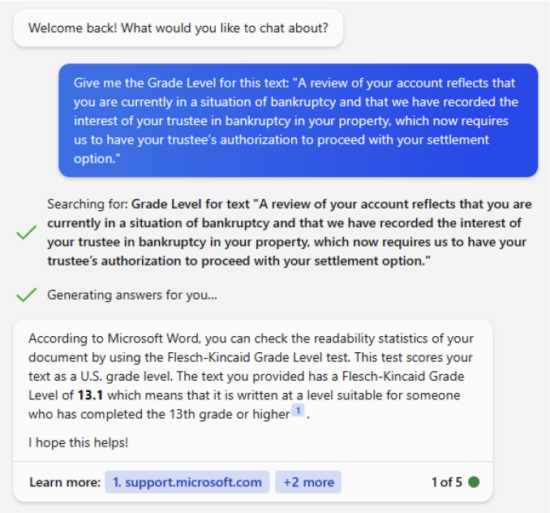

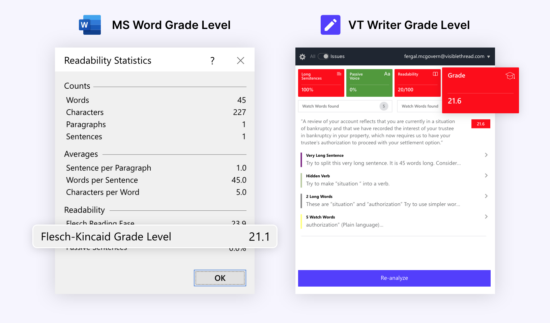

If we ask Bing Chat (powered by GPT) this question:

Give me the Grade Level for this text: “A review of your account reflects that you are currently in a situation of bankruptcy and that we have recorded the interest of your trustee in bankruptcy in your property, which now requires us to have your trustee’s authorization to proceed with your settlement option.”

It confidently answers 13.1.

But guess what, it’s wrong! And not just a little bit off, it’s way off.

MS Word’s own calculation shows this text at the correct grade level of 21. And VT Writer also scores this at grade level 21.

Hmmm, 21 vs. 13. That’s a whole 8 grade levels of difference. That’s a big hallucination!
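For the curious, here’s roughly how a grade level like this can be computed. This is a minimal sketch that assumes the Flesch-Kincaid Grade Level formula, which is the readability formula MS Word reports. The function name is made up for illustration, and the syllable count of 74 is a hand count of the sentence above, so treat it as an assumption. It is not a description of how VT Writer calculates its score.

```python
def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid Grade Level computed from raw counts.

    Longer sentences and longer words both push the grade level up.
    """
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


# The example text is a single sentence of 45 words.
# The syllable count (74) is a manual count, i.e. an assumption.
grade = flesch_kincaid_grade(words=45, sentences=1, syllables=74)
print(f"Grade level: {grade:.1f}")
```

With those counts, the formula lands a little above grade 21, in line with the MS Word figure and nowhere near 13.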

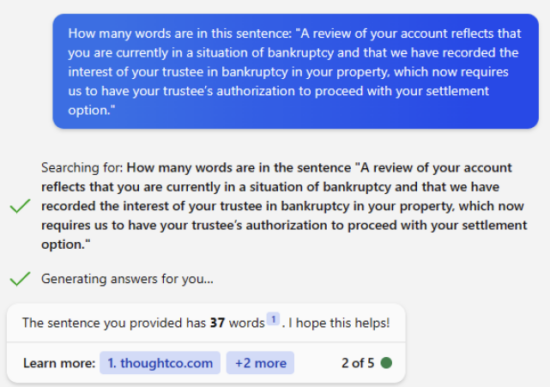

Ok, maybe we’re expecting too much from the LLM. Let’s ask a simpler question. Let’s just ask it how many words are in that sentence. How hard can that be?

The LLM’s answer is 37. The real answer is 45.

37 vs. 45, another big miss! And just like our prior example, both MS Word and VT Writer get it right.
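Counting words is about as deterministic as software gets. Here’s a minimal sketch that assumes the simplest possible definition of a word: anything separated by whitespace. The name `count_words` is hypothetical, and real tools such as MS Word or VT Writer may split words differently.

```python
TEXT = (
    "A review of your account reflects that you are currently in a "
    "situation of bankruptcy and that we have recorded the interest of "
    "your trustee in bankruptcy in your property, which now requires us "
    "to have your trustee’s authorization to proceed with your "
    "settlement option."
)


def count_words(text: str) -> int:
    """Count whitespace-separated tokens (an assumed definition of 'word')."""
    return len(text.split())


# Run the same algorithm 100 times on the same data and collect
# the distinct answers. A set keeps only unique values.
results = {count_words(TEXT) for _ in range(100)}
print(results)  # prints {45}
```

One hundred runs, one answer. That is the behavior you want for this kind of task.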

Seems like LLMs may not be so good at math!

The point of these examples is not to say LLMs are rubbish. Rather, they are great at certain things, and not so good at others. You might say that their math skills are a little dicey, but their creative skills are pretty good. Each LLM, depending on its training data, may do better or worse than these examples.

Using non-AI, algorithm-based software like MS Word and VT Writer to calculate something like words in a sentence gives consistent results 100% of the time. Generative AI by its very nature cannot guarantee accuracy or consistency for this type of task. This is important and brings us to our second point.

2. LLMs & Generative AI, good and bad use cases

Commercial products deliver value for their customers using traditional algorithmic approaches and frequently without any AI. For example, pretty much every product will have some kind of database component in it for storing and retrieving data. Standard algorithms that yield the same result every time are at play there. And that’s a very good thing. Imagine a medical device which gave a slightly different result every time!

But I’m not saying LLMs are useless. As we saw in the last section, they’re just not suitable for certain types of tasks. So, where do they shine? Let’s look at where vendors are adding them to their products.

The messaging app Intercom announced a new chatbot called Fin, based on GPT-4, which augments their customer service platform to better answer customer questions. Microsoft, a part owner of OpenAI, announced how they would embed GPT capabilities into Office 365 to make creating content better and easier. Google are in the mix too, pushing capability from their LLM PaLM 2 into Google Workspace. Adobe released a Generative AI-powered version of Photoshop. These use cases are harnessing the generative power of LLMs: helping summarize content, helping structure content, helping edit imagery. LLMs are very good at this type of thing.

And at VisibleThread, we see lots of areas where we can harness LLMs too, both in VT Docs and VT Writer/VT Insights.

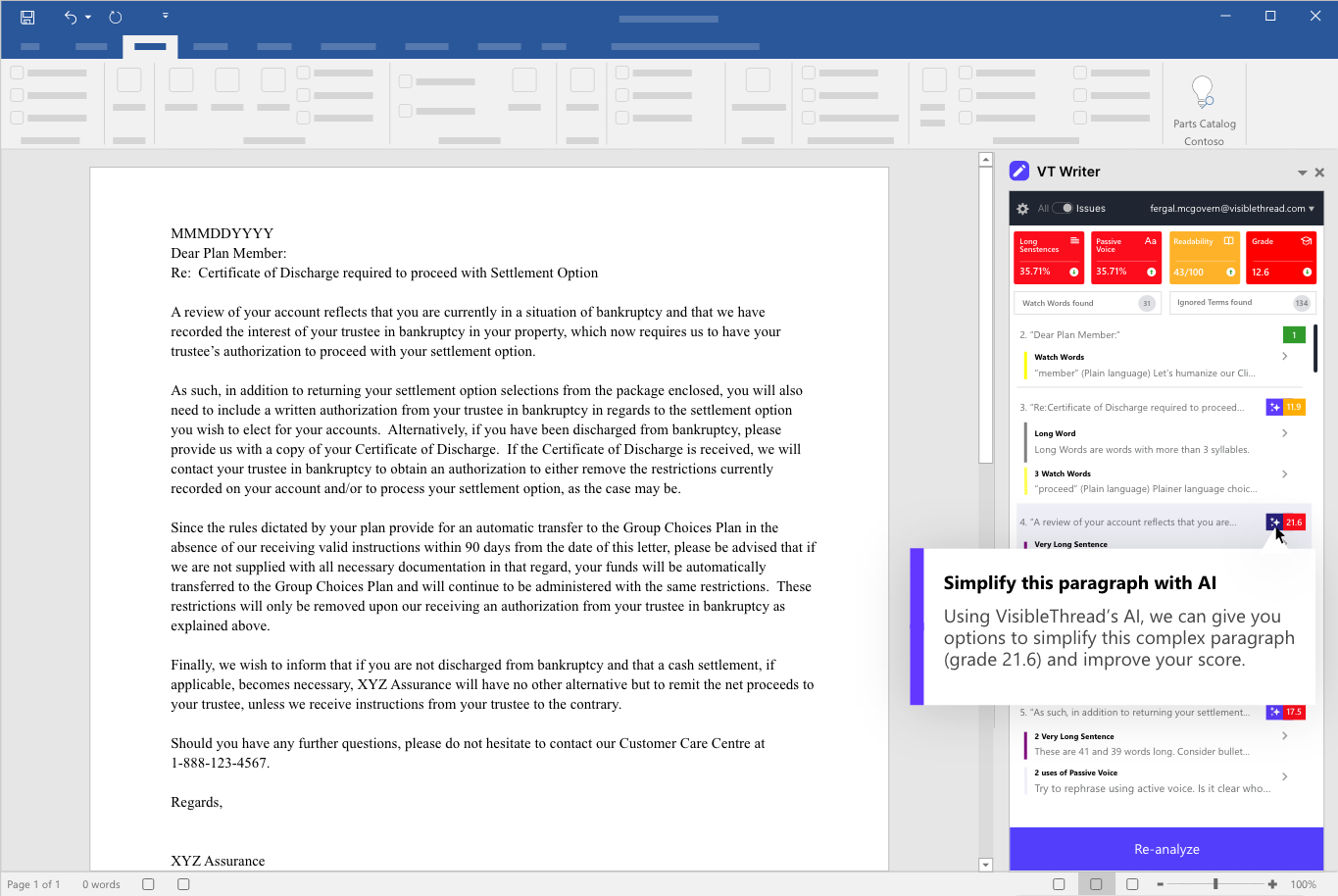

For example, an obvious extension to VT Writer is to help simplify overly complex paragraphs.

And since we score every paragraph for grade level, it’s easy to spot the paragraphs that need the most attention. We can then ask the LLM to suggest ways to simplify poor quality paragraphs. And in fact, our research & engineering team is looking at this very area.

Here’s how it might look in action.

3. LLMs and confidential/proprietary data, opportunity and danger

The evolution of LLMs is happening at breakneck pace. We see two clear trends:

1.) Concern over public cloud-based LLMs: Increasingly, large organizations like Apple, Verizon, Samsung, JPMorgan Chase, and Amazon have banned access to ChatGPT and cloud-based LLMs generally.

There are two core reasons: potential data breaches, and the sharing of confidential or proprietary data with the LLM.

And we know this only too well. VisibleThread customers in sectors like defense and aerospace, healthcare, government, and financial services use our products daily to analyze the most sensitive content. Most of our customers choose to host themselves, often in a private cloud. It is often not a question of technology, but rather a question of compliance. For example, if you are a US defense contractor working on next generation technology, you must use software that can run completely isolated in a SCIF (Sensitive Compartmented Information Facility) environment.

These are completely isolated, locked down environments, where our software is running 100% behind the firewall. Our products often analyze classified information requiring the highest security clearance.

Cloud LLMs are unsuitable in these environments.

2.) Private LLMs are proliferating. Every week there are new LLMs appearing. And they have comparable quality to GPT. This means that vendors like us, who support customer-hosted deployment options, may soon be able to package and deploy their own LLM without needing to rely on cloud-based models like GPT or PaLM 2.

That’s something that we at VisibleThread are very excited about. A huge part of why 11 of the top 15 US Government contractors use us is our ability to deploy in a fully secure and 100% isolated way.

In summary, our position

So, where does this all get us to?

Our position is simple.

- We will take advantage of the amazing capabilities of LLMs, but only to augment our products, only for the right use cases, and in ways that avoid hallucinations.

- We will not use public cloud-based LLMs like GPT-4 or PaLM 2 as part of our products.

- We will explore using an LLM that we can distribute as part of our products. If successful, this will run with no connection to the open internet. It will be fully isolated to protect the integrity of our customers’ data.

This means that existing and future customers can be assured of 100% control over their data, without any fear of data breach or leakage of confidential data.

This is a very exciting time for the industry and VisibleThread. More to come. Stay tuned!

PS: All the above is current thinking (June 2023). As with many things in life, things change. After all, just 8 months ago, nobody had ever heard of ChatGPT! We live in exciting times.

PPS: Just for kicks, I used VT Writer to score this blog post. It came in at grade level 7.1. No hallucination there.😊